In September 2015, we released a brand new Collective Knowledge framework for collaborative, systematic and reproducible computer systems research, and moved all further development to GitHub: src, docs

This page has not been updated since summer 2015 - see this wiki instead!

Collective Mind

towards collaborative, systematic and reproducible computer engineering


We are a group of researchers working with the community on a new methodology, infrastructure and repository to enable collaborative and reproducible research and experimentation in computer engineering. This work is a side effect of our projects on combining performance/energy/size auto-tuning with run-time adaptation, crowdsourcing, big data and predictive analytics (see our manifesto and history). Our approach, in turn, helped to enable a new publication model where all research material (code and data artifacts) is shared along with articles to be continuously discussed, validated and improved by the community! To evangelize this community-driven approach and set an example, we have been releasing all our benchmarks, data sets, predictive models and tools with unified interfaces since 2007 at cTuning.org and later at c-mind.org/repo. We use the ADAPT workshop on self-tuning computing systems to validate our new research and publication model. We hope that it can complement recent academic initiatives on reproducible research at major conferences while focusing more on the technological aspects of collaborative and reproducible research in computer engineering (rather than just sharing and validating artifacts). This R&D is supported by the cTuning foundation.


Our long term vision

With the rapid advances in information technology and all other fields of science comes dramatic growth in the amount of data to be processed ("big data"). Scientists, engineers and students are drowning in experimental data and often have to divert their research towards data management, mining, and visualization. Such work often requires additional interdisciplinary skills including statistical analysis, machine learning, programming and parallelization, database management, and Internet technologies, which few researchers have or can afford to learn in parallel with their main research work. Multiple frameworks, languages and public data repositories have recently started appearing to enable collaborative data analysis and processing, but they often either cover very narrow research topics and are too simplistic (just data and code sharing), or are very formal and still require special programming skills, often including object-oriented programming.

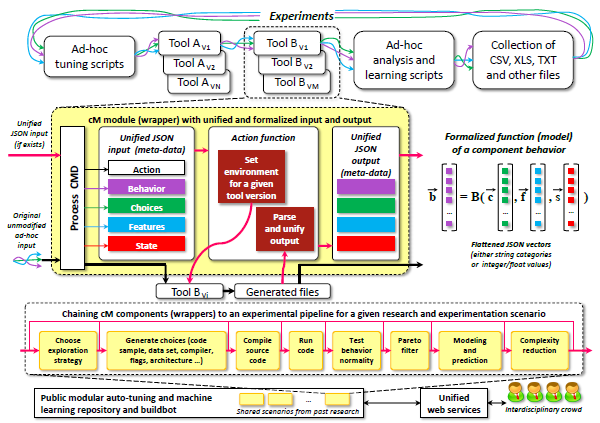

Collective Mind technology (cM) attempts to fill this gap by providing researchers and companies with a simple, portable, technology-neutral and practically transparent way to gradually systematize and classify all their data, code and tools. The open-source cM framework and repository rely fully on customizable public or private plugins (mostly written in Python, with support for any other language through the OpenME interface) to gradually describe and classify similar data and code objects, or to abstract the interfaces of ever-changing tools, thus effectively protecting researchers' experimental setups. cM helps to preserve any complex research artifact (collection of files, benchmarks, codelets, datasets, tools, traces, models) with a gradually and easily extensible JSON-based meta description including classification, properties and either direct or semantic data connections. Furthermore, meta descriptions of all data can be transparently indexed using third-party ElasticSearch, enabling very fast and complex queries. At the same time, all research artifacts can be exposed to any public or workgroup user through unified web services to crowdsource experimentation, ranking, online learning and knowledge management.
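
As a rough illustration of what an extensible JSON-based meta description could look like, the sketch below builds one for a hypothetical benchmark artifact and then extends it with a new property. All key names (`classification`, `properties`, `connections`) and identifiers are assumptions made for this example; they are not taken from the actual cM format.

```python
import json


def make_meta(name, tags, properties, connections=None):
    """Build a minimal meta description for a research artifact.

    The keys used here are hypothetical, not the real cM schema.
    """
    return {
        "name": name,
        "classification": sorted(tags),
        "properties": dict(properties),
        "connections": list(connections or []),
    }


def extend_meta(meta, **extra_properties):
    """Return a copy with extra properties; the original is left untouched."""
    updated = json.loads(json.dumps(meta))  # deep copy via a JSON round trip
    updated["properties"].update(extra_properties)
    return updated


meta = make_meta(
    name="example-benchmark",
    tags={"benchmark", "codelet"},
    properties={"language": "C"},
    connections=[{"relation": "uses-dataset", "target": "example-dataset"}],
)
meta_v2 = extend_meta(meta, compiler="GCC")
print(json.dumps(meta_v2, indent=2, sort_keys=True))
```

Because the description is plain JSON, new properties can be added later without changing code that only reads the older keys, which is one plausible reading of "gradually extensible".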

cM uses an agile top-down methodology originating from physics to represent any experimental scenario and gradually decompose it into connected plugins with associated data, or to compose it from already shared plugins, similar to "research LEGO". This universal structure immediately enables a replay mode for any experiment, making the framework suitable for recent projects on reproducibility of experimental results and for a new publication model where experiments and techniques are validated, ranked and improved by the community. For example, we easily moved all our past R&D on program and architecture multi-objective auto-tuning, co-design and dynamic adaptation to cM plugins and are gradually making them available together with all research artifacts at http://c-mind.org/repo. We hope that cM will be useful to a broad range of researchers and companies, either as an open-source, community-driven solution to systematize their research and experimentation, or possibly as an intermediate step before investing in more complex or commercial knowledge management systems.
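
The idea of decomposing an experiment into connected plugins and then replaying it can be sketched as follows. This is a minimal toy model, not the cM implementation: the function names, the plugin registry and the cost model are all hypothetical, and the equality-based replay check only makes sense because the toy stages are deterministic (real measurements are noisy and would need a statistical comparison).

```python
import json


def run_pipeline(stages, state):
    """Run named stages in order; each takes and returns a JSON-compatible dict.

    Returns the final state and a record sufficient to replay the run.
    """
    record = {"input": json.loads(json.dumps(state)), "stages": []}
    for name, func in stages:
        state = func(dict(state))
        record["stages"].append(name)
    record["output"] = state
    return state, record


def replay(record, registry):
    """Re-run a recorded experiment and check that the output is reproduced."""
    stages = [(name, registry[name]) for name in record["stages"]]
    output, _ = run_pipeline(stages, record["input"])
    return output == record["output"]


# Two hypothetical plugins.
def choose_flags(state):
    state["flags"] = ["-O2"] if state["size"] < 1000 else ["-O3"]
    return state


def estimate_cost(state):
    # Stand-in for a real measurement: a deterministic toy cost model.
    state["cost"] = state["size"] * (0.5 if "-O3" in state["flags"] else 0.8)
    return state


registry = {"choose_flags": choose_flags, "estimate_cost": estimate_cost}
stages = [(name, registry[name]) for name in ("choose_flags", "estimate_cost")]
result, record = run_pipeline(stages, {"size": 2000})
print(result, replay(record, registry))
```

Storing only the input, the ordered stage names and the output keeps the record itself JSON-serializable, so it could be shared alongside other artifacts.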

Here is our list of links to initiatives, publications, tools and techniques related to collaborative and reproducible research, experimentation and development in computer engineering.

Related Collective Mind publications and presentations

- Our new open publication model proposal (2014) (ACM pdf, arXiv pdf) - it summarizes our practical experience with sharing and reviewing experimental results and research artifacts since 2007; we plan to validate it at our ADAPT'15 workshop

- GCC Summit publication (2009) - introducing our vision of reproducible research and describing the cTuning.org framework for collaborative and reproducible program and architecture analysis, optimization and co-design (all code and data for the machine-learning-based compiler MILEPOST GCC have been publicly shared at cTuning.org)

- INRIA/arXiv technical report (2013) - introducing the long-term Collective Mind vision; a considerably updated journal version will be available in Fall 2014

- ACM TACO publication (2012) - introducing crowdtuning (crowdsourcing auto-tuning)

- IJPP publication (2011) - introducing a machine-learning-based compiler, cTuning.org and reproducible R&D on program and architecture optimization

- Long term vision slides - "Systematizing tuning of computer systems using crowdsourcing and statistics"

- cM basics slides - "Collective Mind infrastructure and repository to crowdsource auto-tuning"

Public repository of knowledge

Do not waste your research material - use the Collective Mind Framework and Repository to describe, run and share your experiments with the community!

- Beta live Collective Mind repository (3rd generation, opened in 2013, replacing the previous cTuning repository and infrastructure available since 2008) - we described and shared all our past research developments, codelets, benchmarks, data sets, models, statistical analysis, modeling and online learning plugins and tools to start top-down analysis and optimization of existing computer systems. We used it as the first practical example to motivate a new publication model where all research artifacts are continuously shared, validated and improved by the community. After many years, it seems that the community has finally started moving in this direction, and we even see some related initiatives at major conferences including OOPSLA and PLDI. We believe that our project and the feedback from the community collected since 2006 are complementary and can help with various technological aspects of collaborative and reproducible research in computer engineering.

Common infrastructure and support tools

- Collective Mind Infrastructure (cM) - plugin-based framework and repository for collaborative, systematic and reproducible research and experimentation

- OpenME - universal and simple event-based interface to "open up" black box applications and third-party tools such as GCC, LLVM and Open64 in order to monitor, learn and predict any fine-grained internal optimization decision through external plugins

- Alchemist - OpenME plugin to convert compilers into interactive analysis and optimization toolsets
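
To make the notion of an event-based interface for "opening up" a tool more concrete, here is a small sketch. It does not reproduce the real OpenME API: the `EventBus` class, the event name `unroll_decision` and the toy "compiler" function are all hypothetical. It only shows the general pattern of a host tool raising an event at a decision point so that external plugins can observe or override the decision.

```python
from collections import defaultdict


class EventBus:
    """Toy event dispatcher: a host tool raises named events, plugins subscribe."""

    def __init__(self):
        self._handlers = defaultdict(list)

    def subscribe(self, event, handler):
        self._handlers[event].append(handler)

    def raise_event(self, event, params):
        """Pass a mutable dict to every handler; handlers may read or modify it."""
        for handler in self._handlers[event]:
            handler(params)
        return params


def toy_compiler_unroll(bus, loop_trip_count):
    """Hypothetical host tool: exposes its unrolling decision as an event."""
    decision = {"trip_count": loop_trip_count, "unroll_factor": 1}
    bus.raise_event("unroll_decision", decision)
    return decision["unroll_factor"]


log = []


def monitor(params):
    # Monitoring plugin: record the decision as seen before any override.
    log.append(dict(params))


def override(params):
    # Tuning plugin: replace the default decision for longer loops.
    if params["trip_count"] >= 8:
        params["unroll_factor"] = 4


bus = EventBus()
bus.subscribe("unroll_decision", monitor)
bus.subscribe("unroll_decision", override)
print(toy_compiler_unroll(bus, 16), toy_compiler_unroll(bus, 2))
```

With no plugins subscribed, the host tool keeps its default behavior, which is what makes this style of instrumentation minimally invasive.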

Events

- Workshop TRUST 2014 on reproducible research methodologies and new publication models @ PLDI 2014 (Edinburgh, UK)

- Workshop REPRODUCE 2014 on reproducible research methodologies and new publication models @ HPCA 2014 (Orlando, Florida, USA)

- Panel on reproducible research methodologies and new publication models at ADAPT 2014 @ HiPEAC 2014 (Vienna, Austria)

- Thematic session on making computer engineering a science @ ACM ECRC 2013 / HiPEAC computing week 2013 (Paris, France)

- Thematic session on collective characterization, optimization and design of computer systems @ HiPEAC spring computing week 2012 (Goteborg, Sweden)

- Tutorial on Speedup-Test: Statistical Methodology to Evaluate Program Speedups and their Optimisation Techniques @ HiPEAC 2010 (Pisa, Italy)

- Tutorial on cTuning tools for collaborative and reproducible program and architecture characterization and auto-tuning @ HiPEAC computing systems week 2009 (Infineon, Munich, Germany)

- Public discussion on collaborative and reproducible analysis, design and optimization of computer systems @ GCC Summit 2009 (Montreal, Canada)

Current customized usage scenarios

Designing novel many-core computer systems is becoming intolerably complex, ad hoc, costly and error-prone due to limitations of available technology, the enormous number of available design and optimization choices, and complex interactions between all software and hardware components. Empirical auto-tuning combined with run-time adaptation and machine learning has been demonstrating good potential to address the above challenges for more than a decade, but it is still far from widespread production use due to unbearably long exploration and training times, ever-changing tools and their interfaces, the lack of a common experimental methodology, and the lack of unified mechanisms for knowledge building and exchange apart from publications, where reproducibility of results is often not even considered. Since 1993, we have spent more time on preparing and analyzing huge numbers of heterogeneous experiments for self-tuning, machine-learning-based computer systems, or on trying to validate and reproduce others' research results, than on extending our novel ideas.

In 2007, we decided to start collaborative systematization and unification of the design and optimization of computer systems, combined with a new publication model where experimental results are validated by the community. One promising solution is to combine a public repository of knowledge with online auto-tuning, machine learning and crowdsourcing techniques, where the HiPEAC and cTuning communities already have good practical experience. Such a collaborative approach should allow the community to continuously validate, systematize and improve collective knowledge about computer systems, and extrapolate it to build faster, more power-efficient and more reliable computer systems. It can also help to restore the attractiveness of computer engineering by making it a more systematic and rigorous discipline rather than "hacking".

We develop the cTuning collaborative research and development infrastructure and repository (the current version is cTuning3, aka Collective Mind), which enables:

- gradual decomposition and parametrization of complex computer systems and experiments into unified and inter-connected Collective Mind modules (components or plugins) with extensible meta-information

- easy coexistence of multiple versions of tools and libraries

- implementation of experimental pipelines with all related artifacts necessary for collaborative and reproducible research and experimentation

- collection and sharing of statistics, benchmarks, codelets, tools, data sets and predictive models from the community

- systematization of optimization, design space exploration and run-time adaptation techniques (co-design and auto-tuning)

- collaborative evaluation and improvement of various data mining, classification and predictive modeling techniques for off-line and on-line auto-tuning

- new publication model (workshops, conferences, journals) with validation of experimental results by the community
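
The design space exploration and auto-tuning mentioned in the list above can be illustrated with a very small sketch. It assumes an exhaustive search over a tiny space and a synthetic cost function; the parameter names and cost values are invented for illustration, and a real setup would compile and run a benchmark instead and would typically use sampling or learned models for larger spaces.

```python
import itertools


def explore(space, measure):
    """Exhaustively evaluate every point of a small design space.

    space:   dict mapping parameter name -> list of choices
    measure: function taking a configuration dict, returning a cost (lower is better)
    Returns all (cost, config) pairs sorted by cost.
    """
    names = sorted(space)
    results = []
    for values in itertools.product(*(space[n] for n in names)):
        config = dict(zip(names, values))
        results.append((measure(config), config))
    results.sort(key=lambda item: item[0])
    return results


def toy_cost(config):
    # Synthetic stand-in for a measured execution time (hypothetical numbers).
    base = {"-O1": 10.0, "-O2": 7.0, "-O3": 6.5}[config["opt_level"]]
    unroll_penalty = abs(config["unroll"] - 4) * 0.5
    return base + unroll_penalty


space = {"opt_level": ["-O1", "-O2", "-O3"], "unroll": [1, 2, 4, 8]}
results = explore(space, toy_cost)
best_cost, best_config = results[0]
print(best_cost, best_config)
```

Keeping the full sorted result list, rather than only the best point, is what allows the collected statistics to be shared and reused for later modeling.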

The current cM version includes public benchmarks, datasets, tools, techniques and some statistics from Grigori Fursin's past research:

- support for most OSes and platforms (Linux, Android, Windows; servers, cloud nodes, mobiles, laptops, tablets, supercomputers)

- multiple benchmarks (cBench, polybench, SPEC95, SPEC2000, SPEC2006, EEMBC, etc.), hundreds of MILEPOST/CAPS codelets, thousands of cBench datasets

- multiple compilers (GCC, LLVM, Open64, PathScale, Intel, IBM, PGI)

- tools for program and architecture characterization (MILEPOST GCC for semantic features and code patterns; hardware counters for dynamic analysis)

- plugins for powerful visualization and data export in various formats

- experimental pipeline for universal program and architecture co-design, auto-tuning, performance/energy modeling and machine learning

- OpenME interface to instrument programs or statically enable adaptive binaries through multi-versioning and decision trees for run-time adaptation/scheduling while easily mixing CPU/CUDA/OpenCL codelets or any other heterogeneous programming models

- plugins for online auto-tuning and performance model building

- machine-learning enabled self-tuning cTuning CC compiler that can wrap any existing compiler while using crowd-tuning and collective knowledge to continuously improve its own behavior

- plugins for universal P2P data exchange through cM web services

- optimization statistics for various ARM, Intel and NVidia chips
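
The combination of multi-versioning and decision trees for run-time adaptation mentioned in the list above can be sketched roughly as follows. This is only an illustration of the general technique under simple assumptions: the two function versions, the single `size` feature and the threshold of 64 are hypothetical, and in a real system the tree would be derived from measurements rather than written by hand.

```python
def sum_squares_loop(data):
    """Version 1: explicit loop."""
    total = 0
    for x in data:
        total += x * x
    return total


def sum_squares_builtin(data):
    """Version 2: generator expression with the built-in sum."""
    return sum(x * x for x in data)


VERSIONS = {"loop": sum_squares_loop, "builtin": sum_squares_builtin}

# Hand-written decision tree as nested dicts: an internal node compares one
# feature against a threshold, a leaf names the version to call.
TREE = {
    "feature": "size",
    "threshold": 64,
    "below": {"version": "loop"},
    "at_or_above": {"version": "builtin"},
}


def select_version(tree, features):
    """Walk the tree using run-time features and return a version name."""
    node = tree
    while "version" not in node:
        value = features[node["feature"]]
        node = node["below"] if value < node["threshold"] else node["at_or_above"]
    return node["version"]


def adaptive_sum_squares(data):
    """Pick a version at run time, then call it."""
    name = select_version(TREE, {"size": len(data)})
    return name, VERSIONS[name](data)


print(adaptive_sum_squares([1, 2, 3]))
print(adaptive_sum_squares(list(range(100)))[0])
```

All versions compute the same result, so the selection affects only cost, never correctness; that property is what makes it safe to swap in a different tree later.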