cTuning.org is a non-profit educational organization and a founding member of MLCommons. Established by Grigori Fursin in 2008 and registered as the cTuning foundation in 2014, it supports the collaborative development of open-source tools for reproducibility initiatives, artifact evaluation, and open science, as well as the automation of efficient and cost-effective computer system co-design, in collaboration with ACM, IEEE, HiPEAC, and MLCommons.

You can learn more about our history, vision, community initiatives, open-source developments and other related projects from our ACM TechTalk'21, keynote at ACM REP'23, joint Nature article'23, journal article in Philosophical Transactions of the Royal Society'21 and white paper'24. Feel free to follow us on LinkedIn and X.

Our current community activities include:

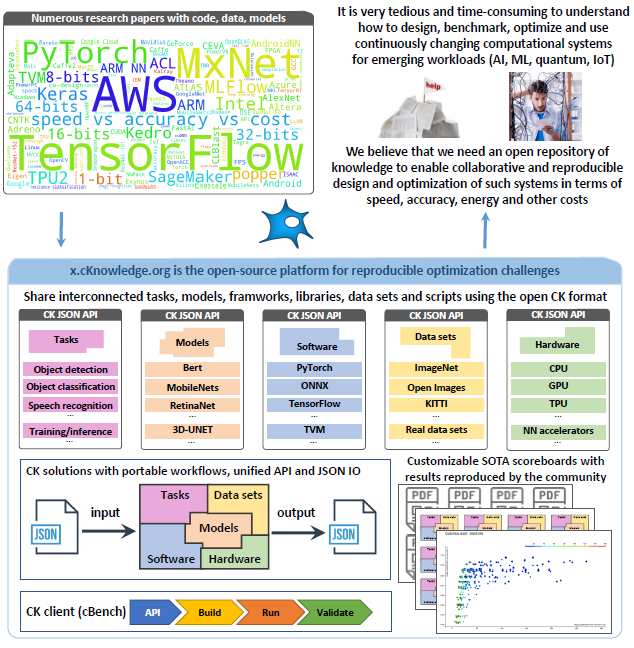

- developing the Collective Knowledge Playground, an educational community project to help students, researchers and engineers learn how to run AI, ML and other emerging workloads in the most efficient and cost-effective way across diverse models, data sets, software and hardware. It is being developed by Grigori Fursin in collaboration with MLCommons, FlexAI, and our great contributors and participants in our open challenges.

- helping AI, ML and Systems conferences set up Artifact Evaluation so that researchers and engineers can reproduce results from published papers and validate them in the real world across continuously changing models, data, software and hardware.

- organizing reproducibility challenges and community MLPerf submissions in collaboration with MLCommons to learn how to co-design more efficient and cost-effective software and hardware for emerging workloads.

- unifying the Artifact Appendix and reproducibility checklist across different AI, ML and Systems conferences.

- leading the development of the Collective Mind workflow automation technology at MLCommons.

We are honored that our expertise and open-source technology have:

- helped ACM develop a common methodology to reproduce research papers and set up the Emerging Interest Group on Reproducibility and Replicability;

- helped ACM and IEEE conferences organize 20+ reproducibility challenges and artifact evaluations;

- helped MLCommons establish the MLCommons Task Force on Automation and Reproducibility and develop the CM workflow automation technology, which runs MLPerf benchmarks out of the box on any software and hardware, from the cloud to the edge, using the portable and technology-agnostic Collective Knowledge v3 that we are developing with the community and MLCommons;

- helped students, researchers and practitioners learn the best practices for collaborative and reproducible research: ACM TechTalk'21, keynote at ACM REP'23, and white paper.